Introduction

This series (introduced here) is about exploring solutions to disinformation. We face a difficult challenge. It requires multiple, coherent plans mutually reinforcing each other in a virtuous cycle. We can “flatten the curve” of misinformation, but first we need to understand our options.

The first post focused on media literacy. You can check it out here.

This month, I’m looking at the role of fact-checking.

A noble profession gets a Nobel nomination

It says something about the state of the world in 2021 that fact-checkers were nominated for a Nobel Peace Prize. The nomination was for the International Fact-Checking Network (IFCN) at the Poynter Institute, which sets standards and promotes collaboration within the industry.

In response Baybars Örsek, Director of the IFCN, said, "Fact-checkers are working worldwide, often under threat or attack, to provide quality information and combat misinformation, often made deliberately, that pollutes society or inhibits freedom."

As we discussed previously with media literacy, fact-checking should be part of the bedrock strategy to suppress disinformation.

In recent years, fact-checking has evolved from a fairly remote field within journalism into a central part of anti-disinformation efforts. Facebook and others work with a wide range of fact-checkers to verify claims circulating on their platforms. This collaboration is often derided, but it should be heralded: turning to third-party experts is no bad thing, and having a number of fact-checkers with different perspectives gives us a better chance of understanding the full picture.

But if fact-checking worked that well, we’d have eliminated disinformation by now, and evidently we haven’t. So before going into the strengths and weaknesses of fact-checking as a tactic, let’s understand it better.

The role of fact-checkers

Traditionally, fact-checking was an internal part of the newsroom. Ideally, this team would spend a long time in painstaking research to verify each claim made in an article by a colleague. This could mean everything from making sure the reporter got the dates and times of events and the spelling of every name right, to further investigation of the more detailed claims within the piece.

Sadly, most publications struggle to find the resources for a dedicated team like this nowadays, and much of this work is now performed by an editor or colleague. That’s a story for another post, which we’ll come back to, but it’s part of the story of the rise of social media. Just as publishers felt the squeeze financially and cut back, social platforms rode a wave to almost unimaginable wealth.

Today, many within the fact-checking community provide their service to social platforms. Instead of using their skills to interrogate the work of colleagues, they spend hours sifting through claims made on social media to ensure a healthier ecosystem of information. Fact-checkers are bringing their skills to the 21st century.

How platforms utilise fact-checkers

Platforms use the work of fact-checkers in various ways. The general outline is this: either through user reports or internal technology, a queue of flagged content is created for review. The queue can be ranked automatically so that the most urgent, dangerous or risky items come first. Some items will be handled by an internal team of content moderators (more on them in another post), while others will go to third-party fact-checkers for review.

When the fact-checker reaches a conclusion, the platform has a range of options. If the post is deemed false, it may be labelled with a warning. For Instagram that could look like this:

Sometimes, platforms will remove the offending content if it breaks terms and conditions. This is what happened to the notorious video “Plandemic” in the summer of 2020. Full of baseless conspiracies about COVID-19 and vaccines, the video went viral over a few days and gained millions of views across multiple platforms. Eventually, it was removed altogether by the mainstream platforms, though they struggled to keep copies of it from being reuploaded.

If the content is rated as “borderline”, platforms might cease recommending it. According to Facebook, "This significantly reduces the number of people who see it."

Aside from labels, takedowns, and ceasing recommendation, the platform might consider issuing a flag or strike to the account owner. Multiple strikes could mean more punishment, including removal of the account entirely.
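To make that flow a little more concrete, here is a minimal sketch in Python of a ranked review queue and the decisions that follow from a rating. Everything in it is an assumption for illustration: the rating names, the `ACTIONS` table, the `STRIKE_LIMIT` of three and the risk scores are hypothetical, and no platform has published logic in this form.

```python
import heapq
from collections import Counter
from itertools import count

# Hypothetical mapping from a fact-checker's rating to a platform action.
ACTIONS = {
    "false": "label_with_warning",
    "violates_terms": "remove",
    "borderline": "stop_recommending",
    "accurate": "no_action",
}

STRIKE_LIMIT = 3  # assumed threshold, not a documented platform value


def build_queue(flags):
    """Turn (risk, post_id, account) tuples into a heap, riskiest first."""
    tie_breaker = count()  # keeps ordering stable when risk scores are equal
    # heapq is a min-heap, so the risk score is negated.
    heap = [(-risk, next(tie_breaker), post_id, account)
            for risk, post_id, account in flags]
    heapq.heapify(heap)
    return heap


def review(heap, ratings, strikes):
    """Pop posts in priority order and decide what happens to each one."""
    decisions = []
    while heap:
        _, _, post_id, account = heapq.heappop(heap)
        # Posts without a rating yet are passed on to a fact-checker.
        action = ACTIONS.get(ratings.get(post_id), "send_to_fact_checker")
        if action in ("label_with_warning", "remove"):
            strikes[account] += 1
        account_removed = strikes[account] >= STRIKE_LIMIT
        decisions.append((post_id, action, account_removed))
    return decisions


flags = [(0.2, "post-1", "alice"), (0.9, "post-2", "bob"), (0.5, "post-3", "bob")]
ratings = {"post-1": "accurate", "post-2": "violates_terms", "post-3": "borderline"}
for decision in review(build_queue(flags), ratings, Counter()):
    print(decision)
```

The riskiest post is reviewed first, and the strike counter is kept per account rather than per post, which is what allows repeated offences to escalate to account removal.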

Of course, fact-checkers don’t just sift through a queue provided by platforms. They have their own methods of detecting new claims to check. And they have core methods for doing the research itself.

Establishing research standards

Many fact-checkers disclose their methods. Transparency around standards is a critical way to gain trust, as is an explanation within each fact-check of how the journalist reached the final conclusion.

Africa Check, a non-profit established in South Africa in 2012, and now with offices in Nigeria, Kenya and Senegal, is one good example of this. They have a page outlining their Code of Principles, a page outlining how they fact-check, and a page outlining explanations for all their ratings. PolitiFact, which like the IFCN is operated by Poynter, has a similar page outlining principles for their famous “Truth-o-meter”.

The IFCN has played a big role in establishing global criteria for standards within the industry. There are five key principles that organisations must abide by to join the network:

A commitment to non-partisanship and fairness.

A commitment to standards and transparency of sources.

A commitment to transparency of funding and organisation.

A commitment to standards and transparency of methodology.

A commitment to open and honest corrections policy.

Each one of these has sub-principles which go into more detail.

A related and growing field within fact-checking is social journalism and verification.

As mentioned last time, First Draft is a pioneer in media literacy and other initiatives related to disinformation. Their SHEEP acronym serves as a good guide before sharing content, but works equally well as an insight into the verification process:

S = Source. Who is this person who seems to own this content?

H = History. Does the source have an agenda or anything else worth noting in their other posts?

E = Evidence. Does the claim they're making have any reliable evidence from elsewhere?

E = Emotion. Check for sensational, inflammatory and divisive language.

P = Pictures. How is the source using images to get attention?

This is where I have to declare a bit of self-interest: eight years of my career were spent at Storyful. Set up in Dublin, Ireland, in 2009 by Mark Little, the Storyful mission was never defined as “fact-checking” per se, but the principles of investigation were similar. For us, the focus was on verifying content from social media and acquiring permission from the owners of that content for broadcast.

As described by then-editor Malachy Browne in a 2012 blog post (archived here), the verification process was stringent. Finding more information about the source of the content was key. As Malachy outlined, we’d always ask ourselves questions like these:

Where is this account registered and where has the uploader been based, judging by their history?

Are there other accounts – Twitter, Facebook, a blog or website – affiliated with this uploader? What information do they bear to indicate recent location, activity, reliability, bias, agenda?

How long have these accounts been in existence? How active are they?

Do they write in slang or dialect that is identifiable in the video’s narration?

Can we find WHOIS information for an affiliated website?

Is the person listed in local directories? Do their online social circles indicate they are close to this story/location?

Does the uploader ‘scrape’ videos from news organisations and other YouTube accounts, or do they upload solely user-generated content?

Are the videos on this account of a consistent quality?

Are video descriptions consistent and mostly from a specific location? Are they dated? Do they have file extensions such as .AVI or .MP4 in the video title?

Are we familiar with this account – has their content and reportage been reliable in the past?
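Most of these questions need human judgement, but one or two can be partly automated. As a small illustration, here is a sketch in Python of the file-extension question: it measures how many of an account's video titles still carry a raw file extension. The function names and the extra extensions beyond .AVI and .MP4 are my own assumptions, not part of the Storyful process.

```python
import re

# .avi and .mp4 come from the checklist above; the other extensions are assumed.
FILE_EXTENSION = re.compile(r"\.(avi|mp4|mov|3gp|wmv)\b", re.IGNORECASE)


def has_file_extension(title):
    """True if the title contains something like '.MP4' or '.avi'."""
    return FILE_EXTENSION.search(title) is not None


def share_with_extensions(titles):
    """Fraction of titles that contain a raw file extension."""
    if not titles:
        return 0.0
    return sum(has_file_extension(t) for t in titles) / len(titles)


titles = ["MOV00123.AVI", "Breaking news report", "VID_20120304.mp4"]
print(share_with_extensions(titles))
```

A number like this is only a hint for the researcher to weigh alongside the other questions. It says nothing on its own about whether a video is authentic.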

Starting with the source, we’d work outwards. Once we established confidence that the person was the original holder of the camera, we’d do a bit more digging into the location and date. Piecing all this together would help us when phoning up the source, so we could ask informed questions to put the verification of content beyond doubt.

Verifying the location was critical. Bellingcat, an excellent initiative from Eliot Higgins with similar methods, has posted an introductory guide for anyone looking to learn these skills. For example, often the nature of shadows gives an incredible amount of insight to the discerning researcher.

There are a number of guides that offer direction in this area. Perhaps the most influential in relation to disinformation is the Verification Handbook For Disinformation And Media Manipulation, edited by Craig Silverman of BuzzFeed. Now in its third edition, which is available online for free, it dives into two key areas: how to investigate “bad actors” and content, and how to investigate what’s happening on social platforms. The guide is packed with insights from industry experts and case studies, and is well worth the time of anyone who wants to understand how to verify content online.

Another useful guide is The Media Manipulation Casebook from the Technology and Social Change project led by Joan Donovan. Also a free resource, it lets anyone dig in to get insights into how bad actors manipulate social media. There’s a mix of easy-to-read case studies and deep-dive research which would be useful to anyone at any level.

Sometimes organisations reveal some of their own tips and tricks. A good example is a recent AFP series on fact-checking and misinformation posted on Facebook Watch.

The field pioneered by Bellingcat has taken to the term OSINT - open source intelligence. My former colleague from Storyful, Eoghan Sweeney, has a site, OSINT Essentials, which provides an insight into the tools, methods and practices within the community.

The field continues to evolve. Amnesty International has developed a course on open source investigations for human rights. Reuters has developed a course called Identifying and Tackling Manipulated Media.

Innovations

For all the evolution described above, the work of fact-checking, debunking and verification is based on old-fashioned journalistic values. Interrogating a source, lateral reading to understand the full context, and checking, checking, checking.

But there are innovations in the field. Full Fact, based in the UK, is not your typical fact-checker. A lot of their work is conventional fact-checking, but they also have a team looking into artificial intelligence and machine learning. One of their teams is investigating the possibility of automating fact-checking, although even they note, “We are not attempting to replace fact-checkers with technology, but to empower fact-checkers with the best tools. We expect most fact-checks to be completed by a highly trained human, but we want to use technology to help:

Know the most important thing to be fact-checking each day

Know when someone repeats something they already know to be false

Check things in as close to real-time as possible”.
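The second of those goals, spotting a repeat of something already checked, can be sketched in a few lines of Python. To be clear, this is not how Full Fact does it. It is a deliberately simple stand-in that compares word overlap (Jaccard similarity), and the function names and the 0.6 threshold are assumptions chosen for illustration.

```python
import re


def tokens(text):
    """Lowercase a statement and split it into a set of words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def jaccard(a, b):
    """Word overlap between two statements, from 0.0 to 1.0."""
    ta, tb = tokens(a), tokens(b)
    if not ta or not tb:
        return 0.0
    return len(ta & tb) / len(ta | tb)


def match_known_claim(statement, checked_claims, threshold=0.6):
    """Return the closest previously checked claim, or None if nothing is close."""
    best = max(checked_claims, key=lambda c: jaccard(statement, c), default=None)
    if best is not None and jaccard(statement, best) >= threshold:
        return best
    return None


# Claims that have already been fact-checked and rated false.
debunked = [
    "The MMR vaccine causes autism",
    "The election was stolen by voter fraud",
]
print(match_known_claim("the mmr vaccine causes autism in children", debunked))
print(match_known_claim("Vaccines are widely available", debunked))
```

Word overlap will miss paraphrases and can be fooled by negation ("does not cause"), which is one reason a trained human still makes the final call.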

There are a number of other organisations looking at this. The Duke Reporters’ Lab has been working on ClaimReview to collect fact-checks from multiple sources and provide that data for automation.
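ClaimReview is a schema.org type, which means a fact-check can be described as structured data that machines can read. As a rough sketch, here is how a single record might be assembled in Python. The property names are real schema.org properties, but the organisation, URL, date and the 1 to 5 rating scale are placeholders I have made up, and real fact-checkers choose their own scales.

```python
import json


def claim_review(claim, verdict, rating_value, reviewer, review_url, date_published):
    """Build a ClaimReview record as a JSON-LD style dictionary."""
    return {
        "@context": "https://schema.org",
        "@type": "ClaimReview",
        "url": review_url,
        "datePublished": date_published,
        "claimReviewed": claim,
        "author": {"@type": "Organization", "name": reviewer},
        "reviewRating": {
            "@type": "Rating",
            "ratingValue": rating_value,
            "bestRating": 5,   # assumed scale: 5 is fully accurate
            "worstRating": 1,  # assumed scale: 1 is false
            "alternateName": verdict,
        },
    }


record = claim_review(
    claim="The MMR vaccine causes autism",
    verdict="False",
    rating_value=1,
    reviewer="Example Fact Check",                    # placeholder organisation
    review_url="https://example.org/checks/mmr-autism",  # placeholder URL
    date_published="2021-02-01",                      # placeholder date
)
print(json.dumps(record, indent=2))
```

Because every record uses the same fields, fact-checks from many different organisations can be collected and searched together, which is what makes the data useful for automation.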

There are global collaborative projects like the IFCN’s COVID-19 misinformation hub. The CoronaVirusFacts / DatosCoronaVirus Alliance Database gathers all the falsehoods identified by fact-checkers in more than 70 countries and over 40 languages. The IFCN says, “This international collaboration has allowed our members to respond faster and reach larger audiences”.

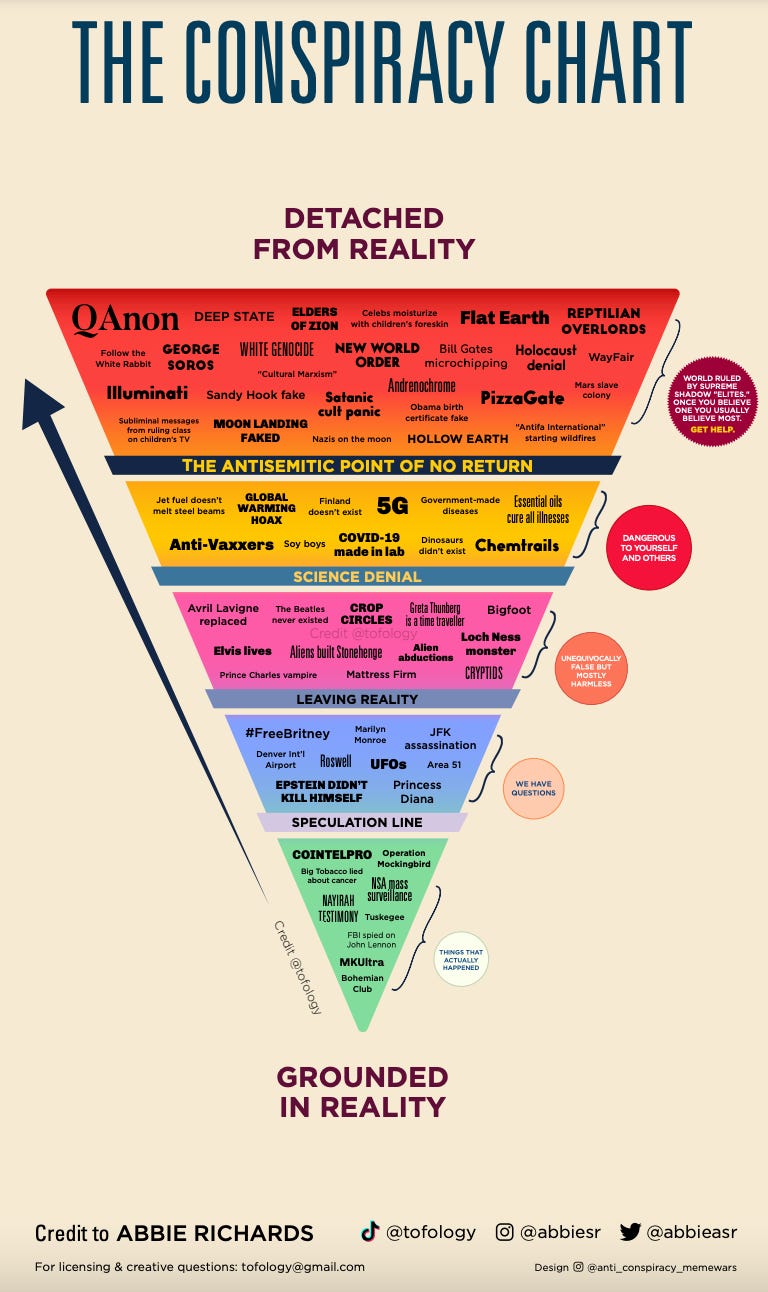

There’s also room for new styles and communication techniques. There is perhaps no one more pioneering here than Abbie Richards. She uses TikTok, as well as other platforms, to debunk conspiracy theories and misinformation. She has over 200,000 followers on TikTok and is playing a big role in helping younger people in particular to understand that not everything they see online can be believed.

And she puts in the hard work. I asked Abbie how long each video takes her, from inception to publication, and she said it can vary from two days to three weeks. For one post she described how she spoke with more than half a dozen extremism and disinformation experts to ensure she was technically accurate with every little detail.

Although she couldn’t be defined as a traditional fact-checker, her work serves a similar purpose in helping to combat viral falsehoods online. Not all innovation has to involve machine learning. She has created an inverted pyramid describing various falsehoods which she calls The Conspiracy Chart:

Even for seasoned professionals like myself, such an eye-catching innovation is a breath of fresh air. When I asked Abbie about the impact she hopes her work will have, she said,

“I make content that I hope will help people to better understand the chaos of our world. There are lots of new challenges in the 21st century - and plenty of old ones too. I want my content to reach people who feel confused and maybe help them feel like they understand the world of information a little bit better… We have to produce accurate, informative, engaging content that finds [people] and to do that you have to understand the digital ecosystems you’re trying to reach. There are so many creators from every background you can imagine who understand their platforms and audiences. Let them help you make communication content that actually reaches people - especially vulnerable people.”

There are more possible approaches. Twitter has just launched a collaborative experiment called Birdwatch which seeks to build upon the wisdom of crowds in combating misinformation. We’ll come back to this in an upcoming post on content moderation. Despite early warning signs that this could backfire, the approach of sites like Wikipedia and Reddit suggests there could be something in asking citizens to get involved in fact-checking each other. It will have to be done very carefully though.

The Washington Post has developed a “DIY Fact-check” series to help anyone become a fact-checker. The more of this the better if we are going to rely on crowdsourcing debunks for Birdwatch and other initiatives like it.

There are other ways of getting people to take part. For example Africa Check is running a campaign for voluntary Fact-Check Ambassadors to foster a culture of media literacy and exposure to the latest work of fact-checkers:

Danger: imposter fact-checkers

Bad actors can seek to ride on the reputation of fact-checkers, which poses a danger. As Sara Fischer recently reported at Axios, the identities of journalists have been hijacked to spread misinformation.

Perhaps the most famous recent example is from the UK General Election of 2019, when the Conservative party rebranded their press office Twitter account as “factcheckUK”. Anyone unaware of the switch might have taken the tweets to be accurate and nonpartisan. The Turkish government is reportedly launching its own fact-checking platform to counter criticism from the opposition. This could go down a very dark path.

Back in 2017, Jasper Jackson wrote for The Guardian about how a Swedish far-right group set up a fact-checking operation to debunk false information online. Of course, it was just a smokescreen for their propaganda.

Will fact-checking change people’s minds?

In February 1998, Andrew Wakefield was the lead author of a study published by The Lancet. He claimed it raised questions about a link between the MMR vaccine and autism. The study was based on just 12 children. The data was later found to be questionable and a Sunday Times investigation found that he had a serious conflict of interest, having been paid a lot of money (which he had never disclosed) by lawyers looking to make a case against MMR. In its retraction, The Lancet stated, "We wish to make it clear that in this paper no causal link was established between (the) vaccine and autism, as the data were insufficient."

Repeated studies since then have shown there is no link between vaccines and autism, but sadly that hasn’t stopped the growth of the anti-vaccine movement. Most of the people leading this movement stand to gain financially from its continued growth, and, particularly since the COVID outbreak, the network has found more room to grow.

So despite years of proof that vaccines are safe, a lot of people’s minds haven’t been changed. Some people are resolutely “anti-vaxx”, others are “vaccine hesitant”, which is understandable if you’ve ever listened to the rhetoric. The movement is particularly adept at speaking to our deepest emotions and fears. For any parent, the thought that a vaccine could cause injury to their child is enough to give them pause. Thankfully, there has been some consolidation in vaccine uptake rates globally, but we still have a large societal problem. And one that could meet its apotheosis with the COVID vaccine.

So the question must be asked: will facts change people’s minds? Despite all the standards and procedures mentioned above, and despite all the innovations, is fact-checking doomed to fail?

This raises deep questions about how we know what we know, how we make up our belief system, what communities we identify with. The hope is that simply knowing the facts is enough. That we can rely on reason to get us out of this mess. That we can refute falsehoods and move on with our lives. But humans aren’t as simple as that.

Every day our minds are stimulated to an almost unfathomable degree. Our brain needs to take shortcuts and so we have, as Daniel Kahneman has described, “fast” thinking and “slow” thinking. In this discussion about changing people’s minds, we are often talking about using the “slow” approach, the ability to calmly reflect, reason, interrogate, and decide based upon the facts. But we need to use the “fast” approach throughout the day too. Otherwise we’d never get anything done.

This is where the problem of confirmation bias comes in, where we welcome new information that fits our existing beliefs. In a world of distraction, we are often using “fast” thinking as we move through the day. We reject anything that comes into conflict with our beliefs. It may be well and good - and true - to say that we should all try to encounter material that challenges us and that forces us to rethink our assumptions, but it’s easier said than done.

A feeling is so much stronger than a thought.

Another example where facts seem to struggle against falsehoods is the outcome of the 2020 US election. Despite no evidence whatsoever that voter fraud influenced the result, a startling number of Republicans - both politicians and supporters - believe that it was stolen.

So much of this stemmed from online falsehoods, conspiracy sites, and influencers (as well as TV), but it reached its offline, real-world culmination in the Capitol riot of January 6, 2021. In the weeks and months leading up to this, CNN’s Donie O’Sullivan (another former colleague from Storyful) went to various MAGA rallies and QAnon events. His documentation of the perspectives of this group is critical in helping us to understand them.

Researcher Kate Starbird has referred to the pro-Trump conspiracy movement as an example of “participatory disinformation”. It’s bottom-up as well as top-down. For many of these people, the mainstream or “lamestream” media is part of an alternate reality. Any attempt at fact-checking that doesn’t align with even their most far-fetched beliefs is ridiculed as part of the “mainstream lie”.

That’s exactly what we see in Donie’s reporting. In one case, he spends time with Trump supporters in Minnesota asking what they see on their newsfeeds. One man talks about a viral video allegedly showing Biden asleep during a TV interview. The footage was faked. As Donie shows the man the original video, and he sees with his own eyes the truth, his only response is, "I definitely wouldn't doubt that it would happen... I missed that one but it was a good laugh."

Donie also interviewed Alan Duke, the editor-in-chief of Lead Stories, one of the fact-checking organizations that works with Facebook. He explained that fact-checkers are not taking sides politically. He shared that some of the most vicious abuse he’s ever received was from left-wing Sanders supporters. But still, Duke said that he regularly gets death threats from Trump supporters. Duke says he replies to the hate mail and tries to have reasonable discussions with people. It’s a noble fight, but a steep mountain to climb.

Once we’ve made up our minds, we’re unlikely to change them. There is academic research to back that up, in a phenomenon known as the “continued influence effect”. Maybe facts are not enough.

But there is also contrary evidence. As Alexios Mantzarlis, formerly of the IFCN and now at Google, wrote for a module on fact-checking for UNESCO, a growing body of research “has found that when corrected especially through reference to authorities deemed credible by the audience, people become (on average) better informed.” Another study found that, "false headlines are perceived as less accurate when people receive a general warning about misleading information on social media or when specific headlines are accompanied by a ‘Disputed’ or ‘Rated false’ tag".

In 2019, researchers from the University of Maryland and Universidad Nacional de Quilmes analysed fact checks published by Chequeado during the Argentine presidential election. Working with 2,040 participants, the study collected insights on the fact checks produced by Chequeado and the impact this work had. Their finding was positive. Laura Zommer, Chequeado CEO, reflected that, "people don’t necessarily change their opinions when Chequeado says that something is wrong, but they do change their behavior. Our intervention reduces the incentive to share content that is misinformative or divorced from evidence."

Because fact-checkers are human, they will always be open to questions of subjectivity and bias. Nevertheless, the attempts to develop standards and procedures, the transparency, and the work of an organisation like the IFCN all help greatly in ensuring that fact-checking provides a much-needed service to correct the wrongs that circulate through our information ecosystem. Whether this changes people’s minds or not will likely be debated for years to come.

The strengths of fact-checking in tackling misinformation

In the Information Age, someone needs to go and literally check for truth. If we didn’t have fact-checking, we’d be in an even worse place. Disinformation loves a vacuum. Nothingness is no response. We need to have quality, correct information available for when people go looking. The “data deficits” that ensue are poisonous. When there is no good information to counter the bad information and people go searching on Google they are led to wrong and potentially harmful information. Clearly, fact-checking should serve as a bedrock, foundational layer in the strategy to flatten the curve of misinformation.

Some studies have found that fact-checking labels on posts containing false information help to reduce the impact of the falsehood. Of course a label can entrench some people further in their alternate reality, but having no such label could have a worse outcome and mean we lose those who are hesitant or struggling with what to believe.

False narratives often reappear. Therefore, existing fact-checks should have recurrent value as they get used again and again when we need to tackle falsehoods or help people become more media literate. We should be able to make the original fact-check go further through automation, artificial intelligence and machine learning. That technology is still in development, as mentioned above, but it’s likely we’ll see much more in this area in the coming years.

Let’s also remember that fact-checking can be used to keep politicians and organisations honest. Just knowing that there is a possibility of being fact-checked can stop an ever-expanding Overton Window of conspiracy thinking.

The weaknesses of fact-checking in tackling misinformation

Alas, fact-checking is, by definition, after the fact. Debunking is useful but often by then the content will have been seen by many. Finding ways to “prebunk” could be more effective.

Fact-checking is not fast. The scale of the problem we’re encountering here is huge. And it takes a lot of time for someone to research detailed claims and check them. In that vacuum, a lie can fly around the world while the truth gets its trousers on, as the saying goes. Fact-checkers struggle to keep up with the speed and quantity of falsehoods online. Would adding thousands more fact-checkers to the IFCN database help? If it would, who is going to pay for that?

Fact-checking is hard to scale. It’s not feasible to address every false claim on the internet. The human resources required to do this work mean that fact-checkers will have to continue to pick and choose what to cover, along with help from algorithms. This means there will be lots of false content circulating online that they will never get to check.

Fact-checkers, and journalists generally, can struggle with WhatsApp and other private messaging services. Many of them have created dedicated WhatsApp channels for people to send them tips or flags, but that hardly helps track all of the viral falsehoods that circulate through private and semi-private groups. This is a particularly difficult question to which there is no easy answer.

Fact-checkers have become reliant on money from tech platforms to fund their existence. This poses a threat in various ways. Some might see it as a sign they are biased in favour of the platform. They are also at risk of losing a lot of this financing if one or two people in Silicon Valley suddenly decide fact-checkers are unnecessary.

Many fact-checkers are reliant on philanthropy. This can be helpful but also poses challenges. Every year, they must go back to the philanthropists and hope there hasn’t been a change of heart. Many fact-checkers are turning to individual donations as a way to grow, although they are hardly likely to repeat the success of The New York Times’s subscription model.

Finally, there is the obvious question: does fact-checking actually get people to change their minds? Are facts enough? There is evidence for and against. We may need to consider other tools - like empathy and what we have learned from deradicalisation experiments - if we are going to make progress bringing people back from an alternate reality.

Where to next?

In fairness, fact-checkers are aware that fact-checking is not a solution on its own.

Rather than denigrate fact-checking for “not fixing misinformation”, we should think about ways to help fact-checkers, empower them, and build more tools for them. Fact-checking struggles with a lack of technology. More resources are needed to help fact-checkers do their job better and faster, and to cover formats and platforms that pose challenges. There are experiments underway, as outlined above, which may help to scale the work of fact-checking in the future. These and more are all welcome.

But of course fact-checking is not going to be the answer to all our woes. The closing lines of an article about the evolution of fact-checking site Snopes seem appropriate here:

“What we need is not just facts and debunking, but a new kind of truth: a truth that kindles joy and communion, a truth that allows us to recognize our follies and laugh at them and learn from them. Where facts are no longer just a joyless pushback against disinformation, but a means of venturing forward into a less terrifying world.”

We need to think about connection. We need better storytelling skills. Helpful and truthful information should be just as engaging and fascinating as any conspiratorial theory dreamed up in a basement, or indeed the Oval Office.

The root causes of conspiratorial thinking go very deep. We need to go beyond debunking and start winning over the hearts and minds of those people who are susceptible to misinformation. This requires extraordinary empathy, and an understanding of emotion, identity, community.

That is the subject of the next post in this series.

*

Other solutions I’ll be considering in upcoming months are technology, research and partnerships, regulation, business models, the role of the media, experiments in new digital public squares, and deradicalisation.

I’d love to hear your thoughts. What have I missed here in relation to fact-checking? Are there other solutions not mentioned above that you think should be part of this? Do you have any research or reading material you’d like to share?

Thanks for taking part.